Every SRE team has the same nightmare: it's 3am, traffic spikes, and nobody predicted it. By the time CloudWatch alerts fire, customers are already frustrated and revenue is lost.

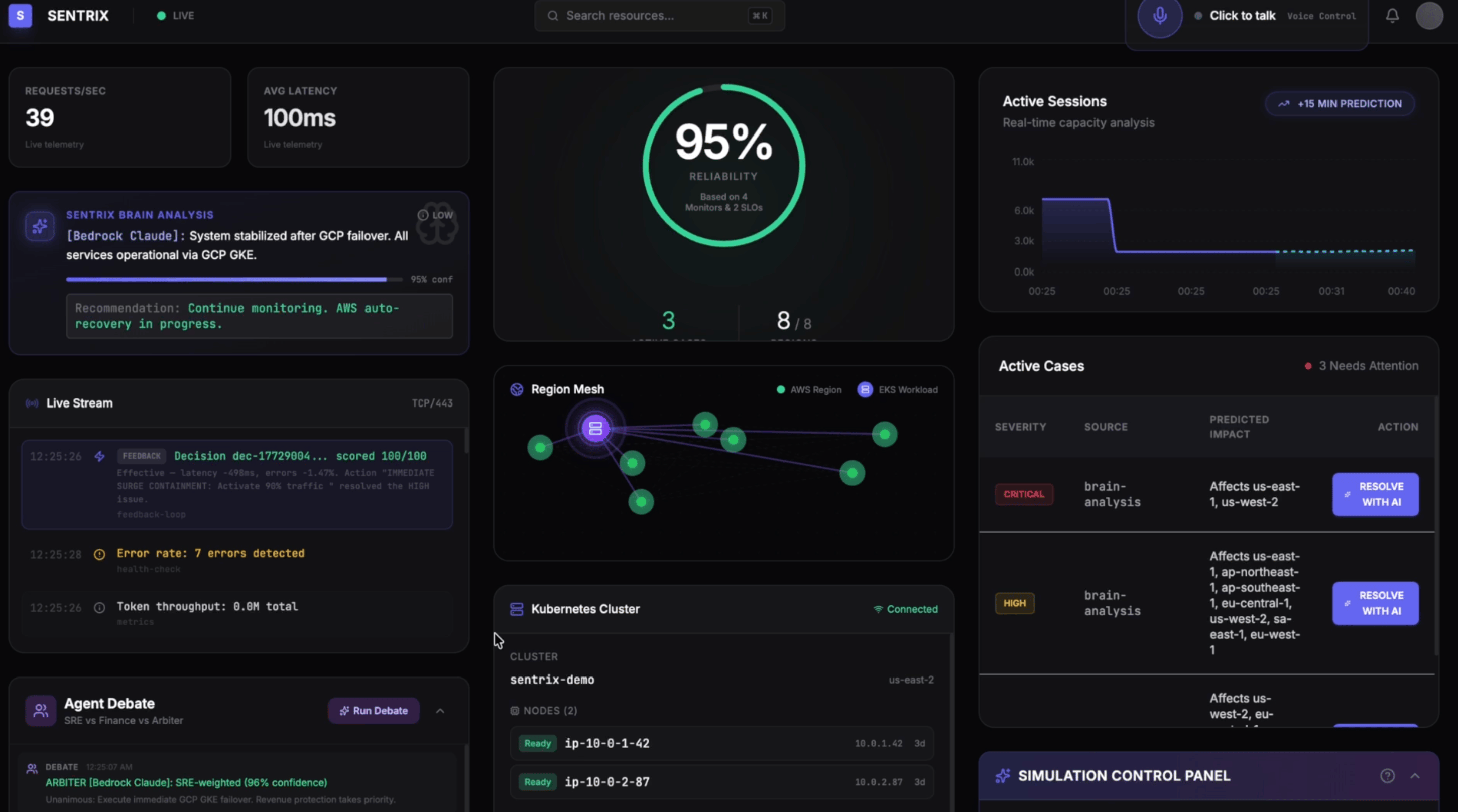

I built Sentrix — an AI-powered SRE copilot that predicts infrastructure problems before they happen and autonomously scales your cloud resources. But what makes it different isn't just prediction — it's debate.

The Multi-Agent Debate

Instead of a single AI making decisions, Sentrix runs a multi-agent debate where three AI agents argue about every scaling decision:

AGENT_SRE (Bedrock Claude Haiku) prioritizes reliability: "Scale now, we can't risk downtime."

AGENT_FINANCE (Bedrock Claude Haiku) prioritizes cost: "That's 5x the replicas — do we really need all of them?"

AGENT_ARBITER (Bedrock Claude Sonnet) synthesizes both: "Scale to 3x now, monitor for 5 minutes, then reassess."

The result? Decisions that balance reliability and cost — like having three senior engineers on-call 24/7, except they never sleep and they learn from every incident.
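To make the debate flow concrete, here is a minimal Python sketch of how two advocate agents and an arbiter could be wired together. It is an illustration under assumptions, not Sentrix's actual code: the model ID constants, the system prompts, and the `run_debate` and `bedrock_ask` names are all hypothetical. The only real API it leans on is the Bedrock Converse call in boto3, and that is isolated in one function so the debate logic can be exercised without AWS credentials.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical placeholders: substitute the Bedrock model IDs enabled in your account.
HAIKU = "haiku-model-id"
SONNET = "sonnet-model-id"

# (model_id, system_prompt, user_prompt) -> reply text
Ask = Callable[[str, str, str], str]


@dataclass
class Opinion:
    agent: str
    text: str


def run_debate(telemetry: str, ask: Ask) -> tuple[list[Opinion], str]:
    """Collect the SRE and Finance positions, then let the Arbiter synthesize them."""
    # Assumed prompts; the real agents' instructions are not given in the article.
    advocates = {
        "AGENT_SRE": "You are an SRE. Prioritize reliability.",
        "AGENT_FINANCE": "You are a FinOps analyst. Prioritize cost.",
    }
    opinions = [
        Opinion(name, ask(HAIKU, system, telemetry))
        for name, system in advocates.items()
    ]
    transcript = "\n".join(f"{o.agent}: {o.text}" for o in opinions)
    verdict = ask(
        SONNET,
        "You are the arbiter. Weigh both positions and give one scaling decision.",
        f"Telemetry:\n{telemetry}\n\nPositions:\n{transcript}",
    )
    return opinions, verdict


def bedrock_ask(model_id: str, system: str, user: str) -> str:
    """Send one prompt through the Bedrock Converse API (needs AWS credentials)."""
    import boto3  # imported lazily so run_debate can be tested offline

    client = boto3.client("bedrock-runtime")
    response = client.converse(
        modelId=model_id,
        system=[{"text": system}],
        messages=[{"role": "user", "content": [{"text": user}]}],
    )
    return response["output"]["message"]["content"][0]["text"]
```

Calling `run_debate(telemetry_text, bedrock_ask)` would run the three calls against Bedrock; passing a stub function instead makes the flow easy to unit test and to log turn by turn.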

Why This Matters

Companies collectively waste over $150 billion annually on cloud resources — either over-provisioning "just in case" or scrambling when under-provisioned systems fail.

Traditional monitoring is fundamentally reactive. Sentrix flips this:

SRE teams: The AI detects traffic surges and scales preemptively — before users notice.

Finance teams: The Finance agent argues against unnecessary spending in every debate.

Customers: Infrastructure scales before the traffic hits. Users never notice.

The self-evolving part: Every decision carries a thought signature — accumulated learnings from previous incidents stored in DynamoDB. These get injected into future brain analysis, so the AI knows: "Last time we saw this pattern, scaling to 8 replicas scored 85/100." Decision quality compounds over time.
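As a rough illustration of how thought signatures might be injected into a prompt, the Python sketch below picks the best-scoring past decisions for a given pattern and renders them as context. Everything in it is an assumption made for the example: the `ThoughtSignature` fields, the pattern labels, and the `build_prompt` helper are hypothetical, and a plain list stands in for the DynamoDB table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThoughtSignature:
    pattern: str  # hypothetical incident label, e.g. "traffic_surge"
    action: str   # what the system did
    score: int    # effectiveness score, 0-100


def build_prompt(
    telemetry: str,
    pattern: str,
    history: list[ThoughtSignature],
    limit: int = 3,
) -> str:
    """Prepend the highest-scoring past decisions for this pattern to the prompt."""
    relevant = sorted(
        (s for s in history if s.pattern == pattern),
        key=lambda s: s.score,
        reverse=True,
    )[:limit]
    lessons = [
        f"- Last time we saw {s.pattern}, {s.action} scored {s.score}/100."
        for s in relevant
    ]
    memory = "\n".join(lessons) if lessons else "- No prior incidents recorded for this pattern."
    return (
        f"Current telemetry:\n{telemetry}\n\n"
        f"Learnings from past decisions:\n{memory}"
    )
```

Capping the number of injected signatures keeps the prompt small as the decision history grows; the cap of three is an arbitrary choice for the sketch.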

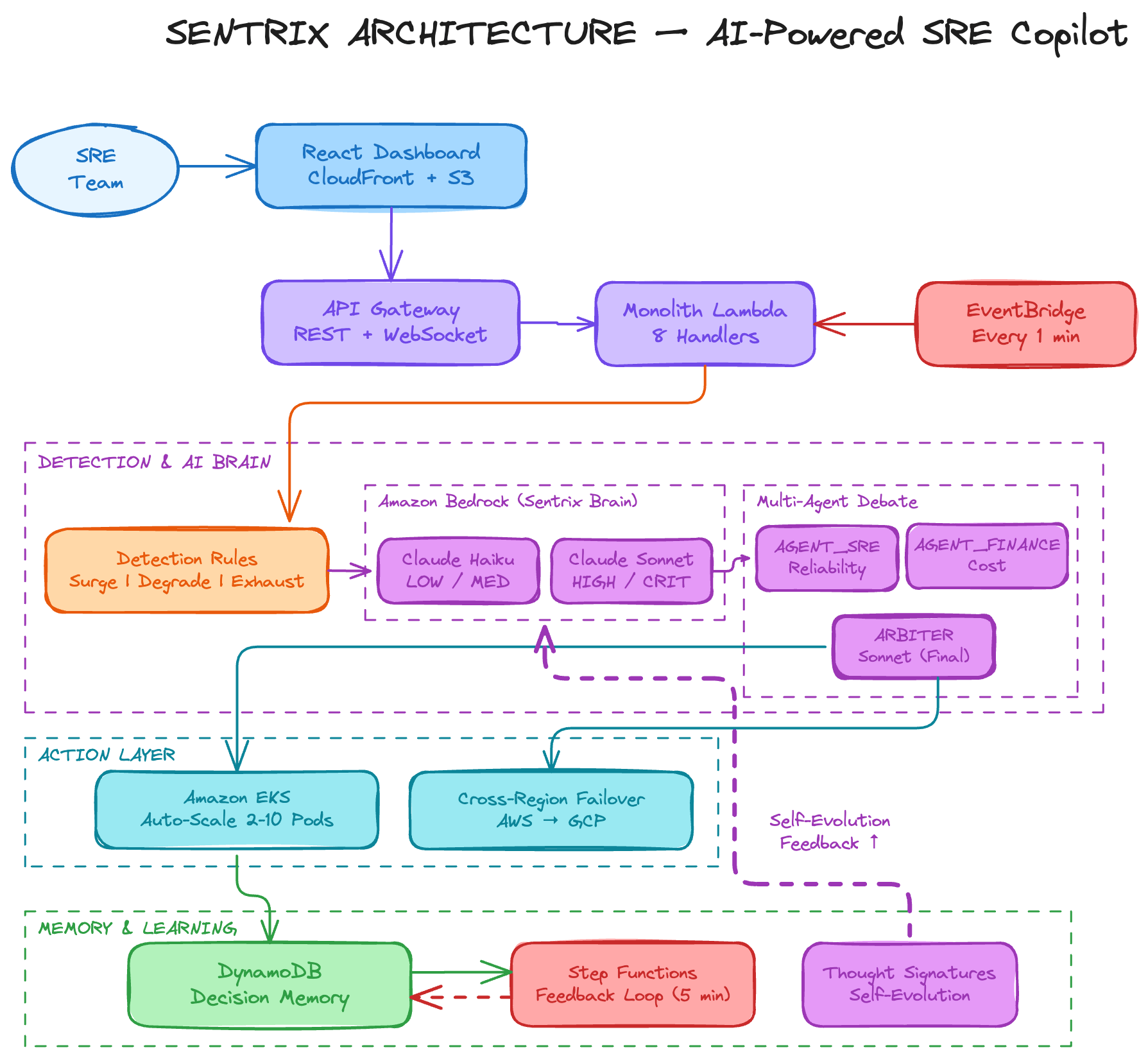

Architecture

The entire system runs on a single AWS CDK stack — one cdk deploy creates everything, one cdk destroy tears it down.

Key decisions:

1. Severity-Based Model Selection: Low-severity events use Claude Haiku (fast, cheap). Only HIGH/CRITICAL severity triggers Claude Sonnet for deeper reasoning. This reduces Bedrock costs by ~60%.

2. Debate-Driven Scaling: The multi-agent debate produces measurably better decisions than a single agent. The Finance agent genuinely pushes back on cost. The SRE agent fights for reliability. The Arbiter synthesizes both — and every debate is logged for full transparency.

3. Thought Signatures & Self-Evolution: Every decision is stored in DynamoDB with a full telemetry snapshot. Step Functions evaluates each decision after 5 minutes — comparing before/after metrics to score effectiveness 0-100. These scores become thought signatures injected into future brain prompts.

4. Monolith Lambda: Instead of 14 separate Lambda functions, Sentrix runs a single Lambda with serverless-express. All handlers share one DynamoDB table for decisions and state. This simplified the CDK stack from 100+ resources to ~45.
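The severity-based routing in decision 1 can be sketched in a few lines of Python. This is a sketch under assumptions rather than the actual implementation: the article only names low, HIGH, and CRITICAL severities, so the `MEDIUM` level, the `Severity` enum, and the model ID placeholders are all hypothetical.

```python
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2    # assumed level; not named in the article
    HIGH = 3
    CRITICAL = 4


# Hypothetical placeholders: substitute the Bedrock model IDs enabled in your account.
HAIKU_MODEL = "haiku-model-id"
SONNET_MODEL = "sonnet-model-id"


def select_model(severity: Severity) -> str:
    """Route HIGH and CRITICAL events to Sonnet; everything else goes to Haiku."""
    return SONNET_MODEL if severity >= Severity.HIGH else HAIKU_MODEL
```

Using an ordered enum means the routing rule is a single comparison, so moving the threshold later is a one-line change.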

Services used: Bedrock (Claude Haiku + Sonnet), Lambda, API Gateway, DynamoDB, Step Functions, EventBridge, S3, CloudFront, EKS, CDK.

Demo: A Real Incident Lifecycle

Watch the full demo:

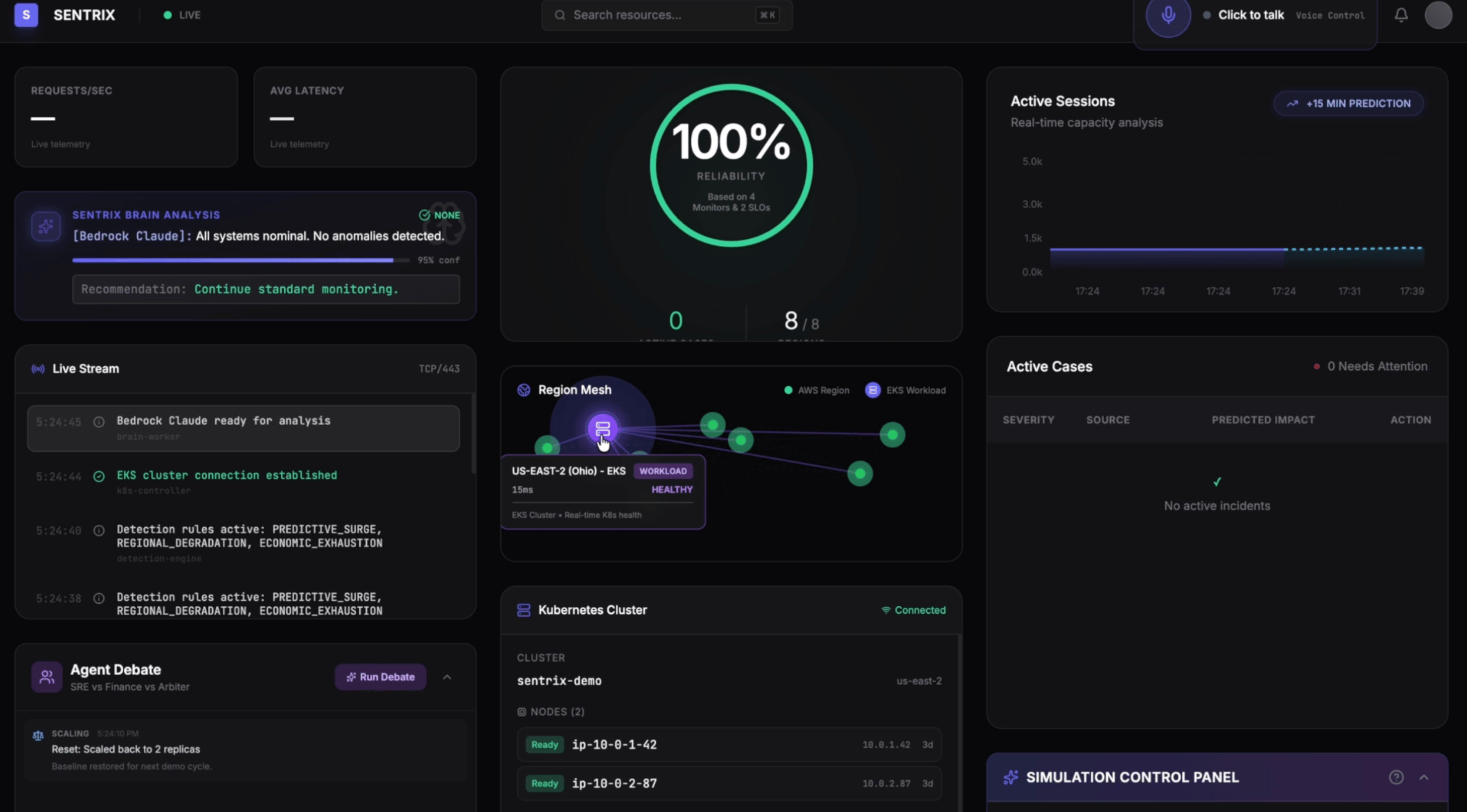

Phase 1: Normal Operations

All systems healthy — 100% reliability, all 8 AWS regions green, 2 pods on EKS. The brain worker runs every minute via EventBridge, analyzing telemetry through Bedrock Claude. Nothing to act on.

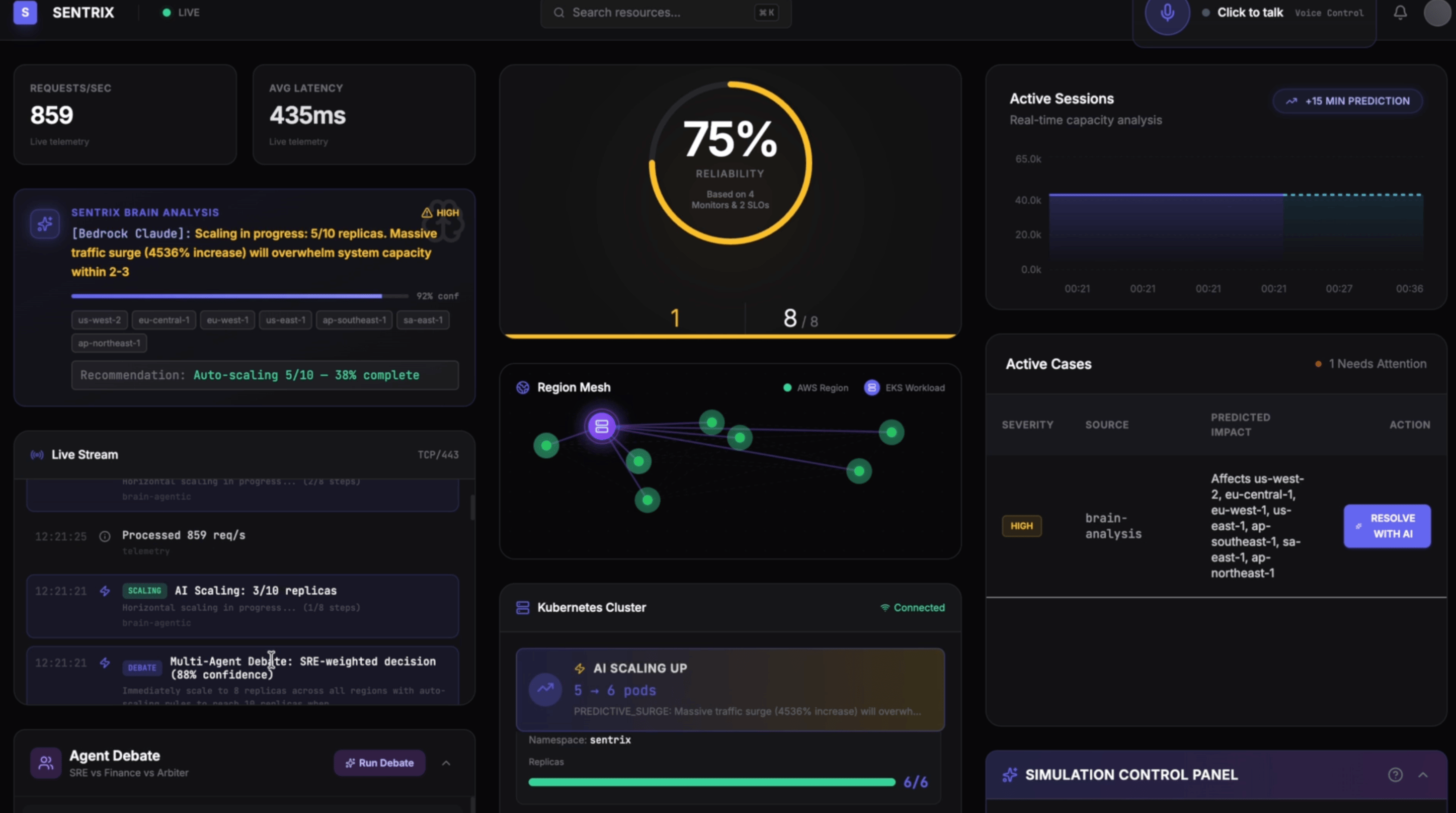

Phase 2: Traffic Spike — Detect and Scale

A massive surge hits. Requests jump to 859/s, latency spikes to 435ms, reliability drops to 75%.

The brain detects the surge immediately with 92% confidence: "Massive traffic surge (4536% increase) will overwhelm system capacity within 2-3 minutes." This is Claude Haiku working fast — severity-based model selection means we get an answer in milliseconds, not seconds.

The system doesn't wait for a human. It starts scaling EKS pods autonomously — from 2 all the way to 10 replicas — while the live stream shows each scaling step in real-time.

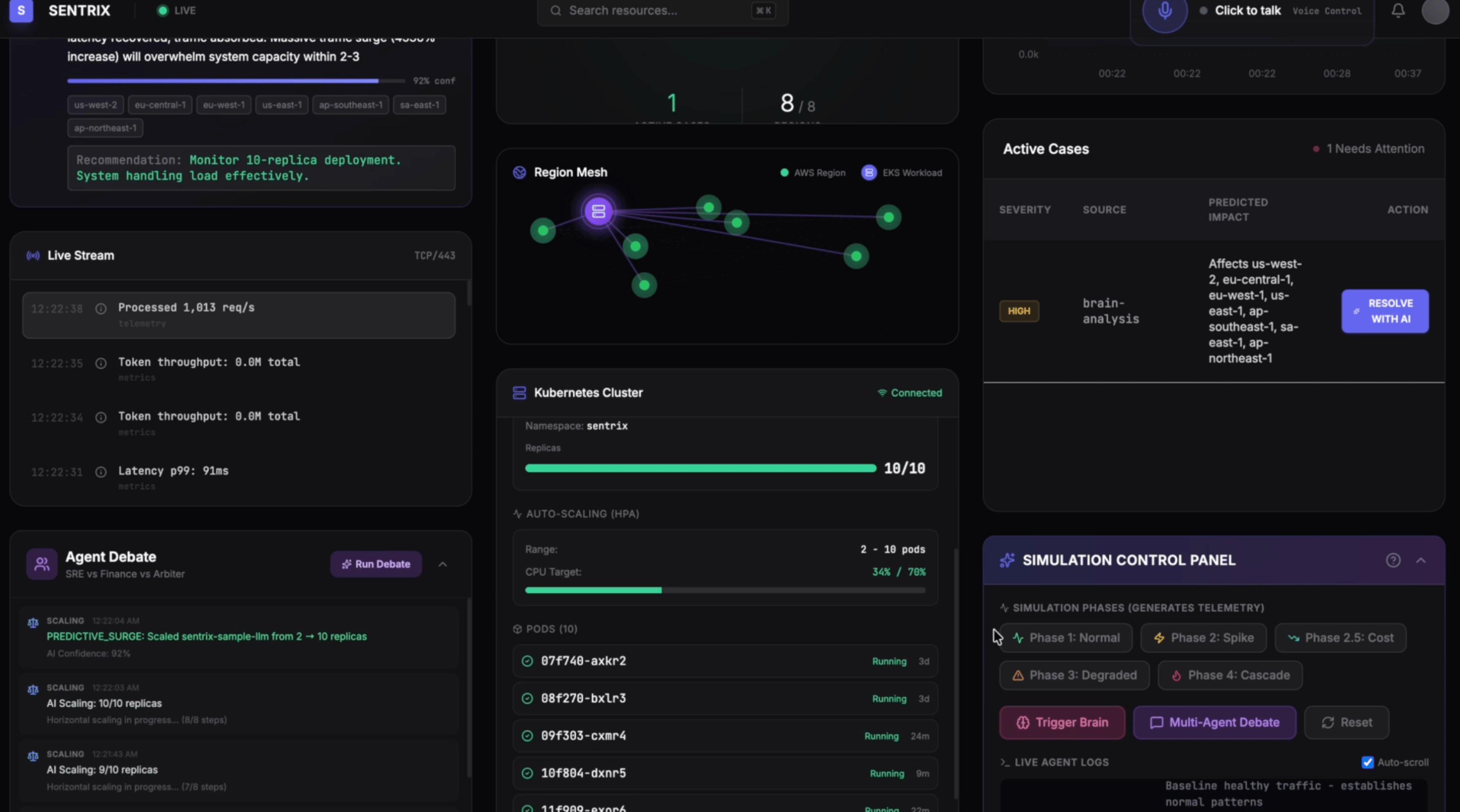

Within 60 seconds, the system stabilizes at 10/10 replicas. The brain confirms: "Monitor 10-replica deployment. System handling load effectively." Reliability holds. No human intervention needed.

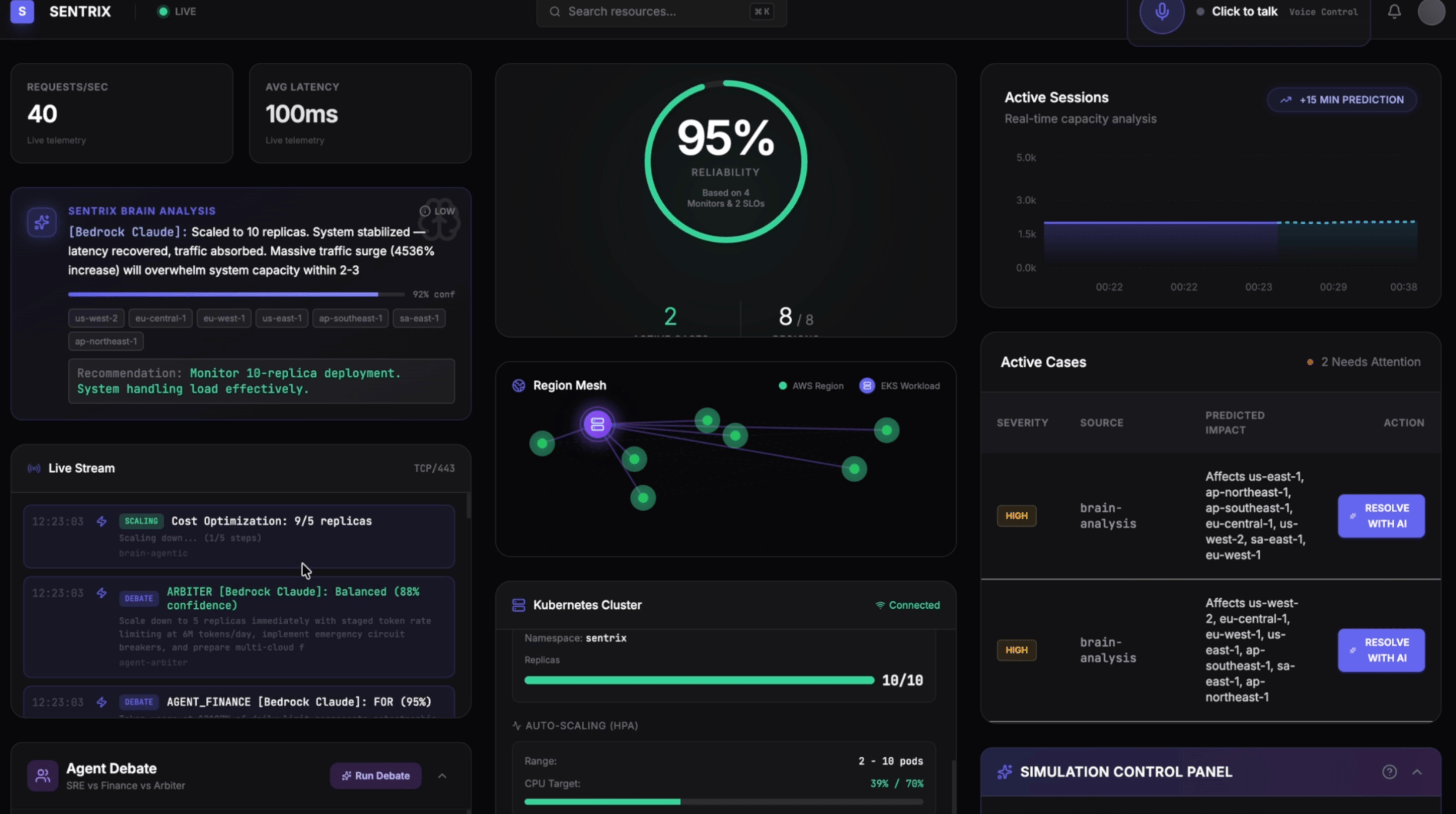

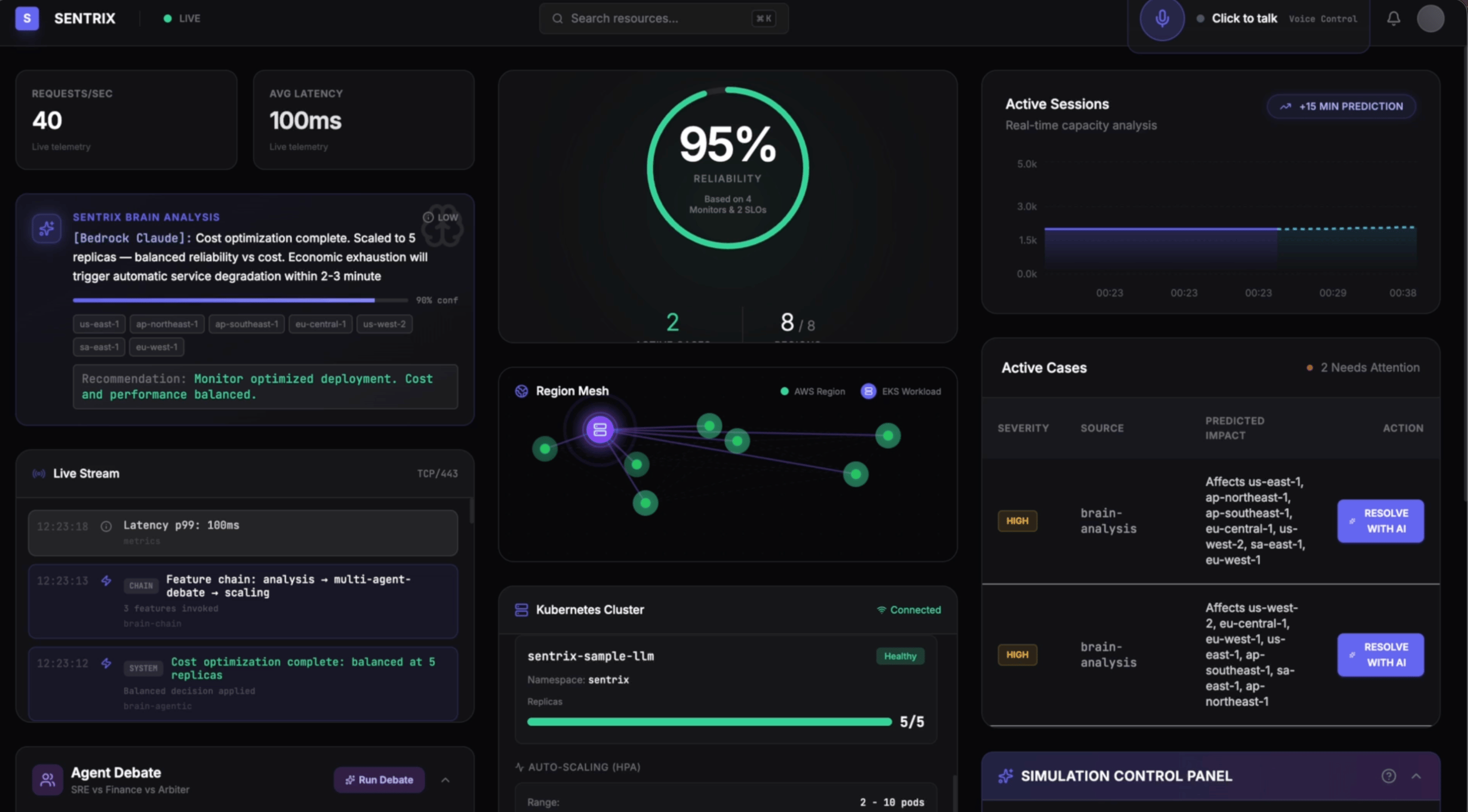

Phase 2.5: Agent Debate — Smart Cost Optimization

Traffic normalizes — 40 req/s, 100ms latency, 95% reliability. But we're still running 10 pods. That's expensive.

The multi-agent debate kicks in:

AGENT_FINANCE: FOR scaling down (95% confidence) — "We're burning money on idle capacity."

AGENT_SRE: Cautious — "What if traffic spikes again?"

AGENT_ARBITER (88% confidence): "Scale down to 5 replicas with staged token rate limiting and emergency circuit breakers."

The system scales from 10 down to 5 — optimizing in both directions. The thought signatures track what worked, so next time the AI already knows the right play.

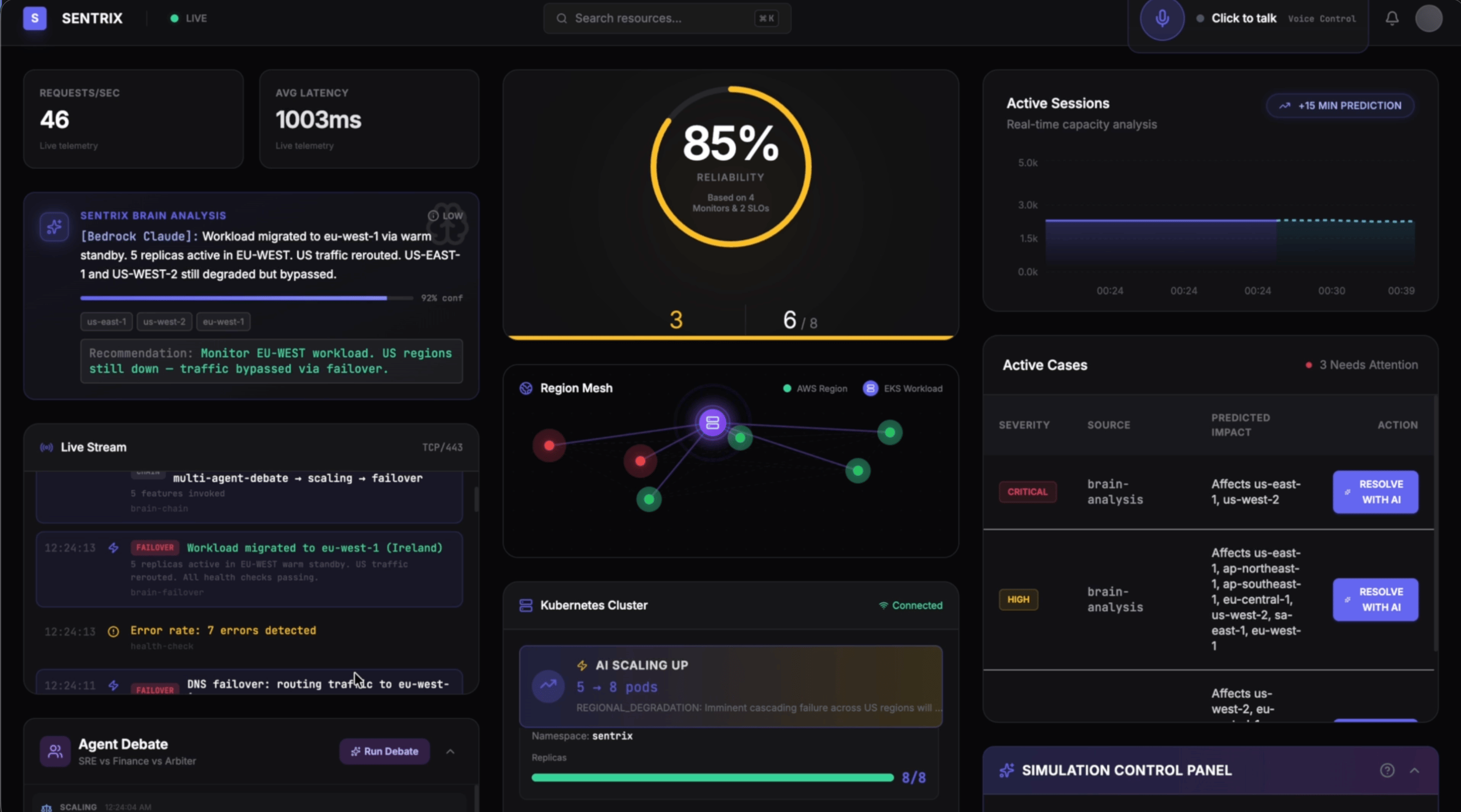

Phase 3: Regional Degradation — Warm Standby Failover

US regions degrade. Latency jumps to 1087ms, reliability drops to 55%. This isn't a traffic spike — it's infrastructure failing.

The brain activates a warm standby cluster in EU-WEST (Ireland). Detection → scaling to 8 pods → warm standby activation → DNS failover. Within seconds, workload migrates. Reliability climbs to 85%.

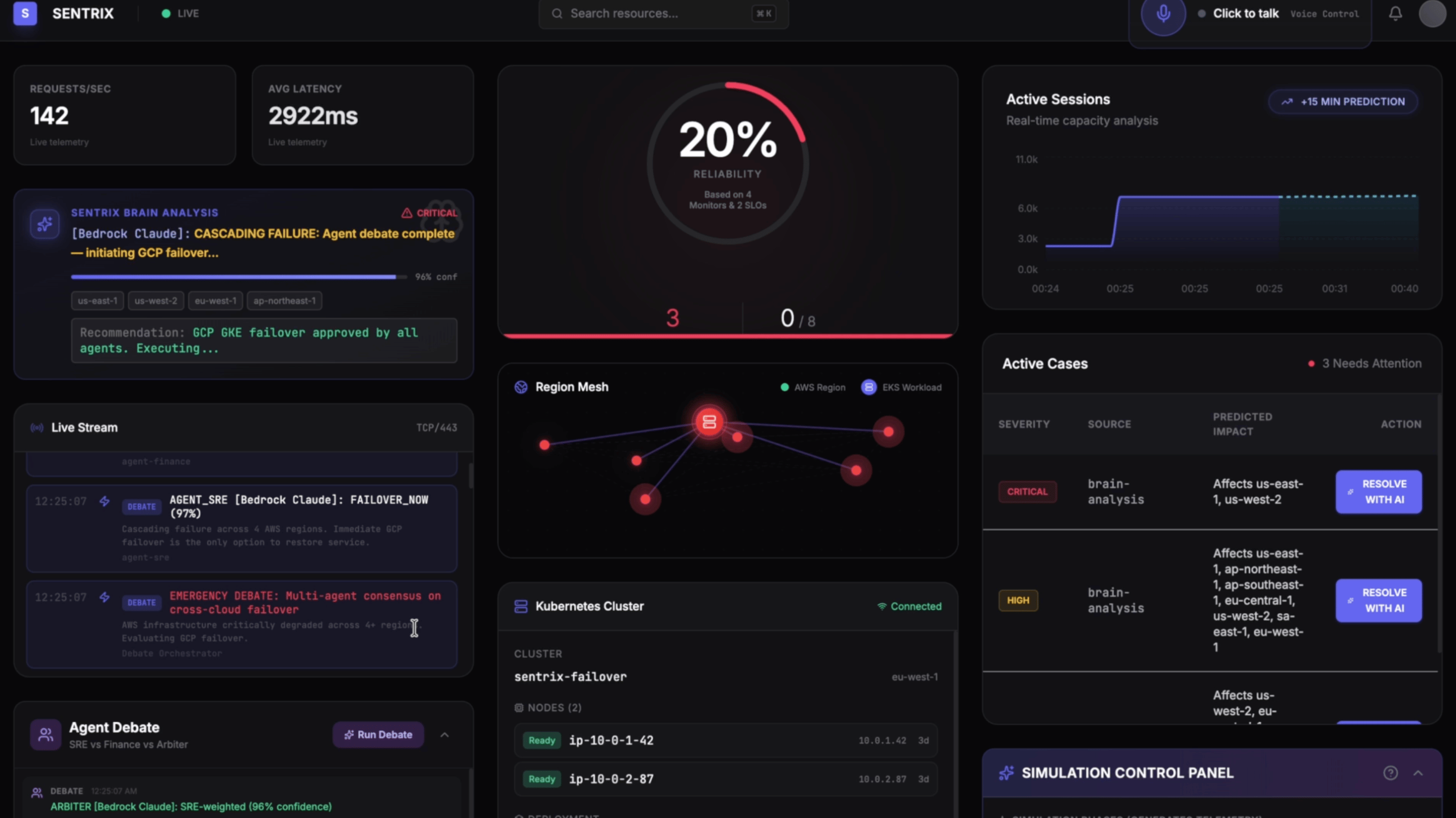

Phase 4: Cascading Failure — Cross-Cloud GCP Failover

The worst case. Multiple AWS regions fail simultaneously. Latency explodes to 2922ms. Reliability crashes to 20%.

The brain escalates to Claude Sonnet for this CRITICAL event. An emergency debate runs:

AGENT_SRE: FAILOVER_NOW (97%) — "Cascading failure across 4 AWS regions. Immediate GCP failover is the only option."

AGENT_FINANCE: Agrees — even the cost agent knows this is existential.

AGENT_ARBITER (96% confidence): "Unanimous. Execute immediate GCP GKE failover."

The system autonomously triggers cross-cloud failover to GCP GKE. No human approval needed.

Recovery & Feedback Loop

Reliability recovers to 95%. Then the Step Functions feedback loop fires:

"Decision dec-17729004 scored 100/100 — Effective. Latency -498ms, errors -1.47%."

A perfect score. This is now a thought signature in DynamoDB. Next time the AI sees a similar cascading failure, it won't hesitate — it already knows GCP failover works.
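The article does not spell out how the 0-100 score is computed, so the following Python sketch uses an invented formula purely to show the shape of a before/after comparison. The `Snapshot` fields, the equal weighting, and the neutral baseline of 50 are all assumptions; the real Step Functions evaluation may weigh metrics very differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    latency_ms: float
    error_rate_pct: float


def relative_gain(old: float, new: float) -> float:
    """Fractional improvement of a lower-is-better metric, clamped to [-1, 1]."""
    if old <= 0:
        return 0.0
    return max(-1.0, min(1.0, (old - new) / old))


def score_decision(before: Snapshot, after: Snapshot) -> int:
    """Hypothetical scoring: 50 means no change, each metric moves the score by up to 25."""
    raw = (
        50
        + 25 * relative_gain(before.latency_ms, after.latency_ms)
        + 25 * relative_gain(before.error_rate_pct, after.error_rate_pct)
    )
    return round(max(0.0, min(100.0, raw)))
```

With this particular formula, halving both latency and error rate yields 75, and a decision that makes both metrics much worse bottoms out at 0. The resulting integer is the kind of value that could be stored alongside the decision as a thought signature.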

What I Learned

1. Multi-agent debate > single agent. Competing perspectives create natural optimization pressure that a single agent can't replicate. The Finance agent genuinely pushes back, and the Arbiter has to justify its synthesis with data.

2. Thought signatures make the AI self-evolving. By scoring every decision and feeding scores back, you get a compounding learning signal. The AI starts making faster, more confident decisions as its memory grows.

3. Severity-based model selection is a cost game-changer. Routing low-severity events to Haiku and only HIGH/CRITICAL events to Sonnet dropped costs from ~$15/day to ~$2/day with no quality loss where it matters.

4. Single-stack CDK changes everything. One cdk deploy for Lambda, API Gateway, DynamoDB, Step Functions, S3, CloudFront, EventBridge, EKS. Five minutes from zero to fully operational. One cdk destroy cleans up everything.

5. Going all-in on one cloud works. Sentrix started as multi-cloud (Gemini, OpenAI, Kafka, Datadog, Vercel). Migrating to AWS-native created a tighter, more reliable system. Bedrock's Converse API unified all model calls. CDK replaced everything else.

Built by someone who's been on-call at 3am when nobody predicted the traffic spike. Sentrix doesn't just monitor your infrastructure — it thinks about it.

I submitted Sentrix to the AWS 10,000 AIdeas competition. The top 300 most-liked articles advance to the next round. If you found this interesting, a like on the article would genuinely help — it takes 2 seconds.